About the client

Grandery is a digital advertising agency with a global reach. Being a data-intensive company, they need advanced solutions to deal with enormous volumes of information.

![Client location on the map](https://static.andersenlab.com/andersenlab/new-andersensite/bg-for-blocks/about-the-client/france-desktop-2x.png)

Project overview

Andersen was approached by a digital advertising agency with a global reach. The customer needed expert assistance to improve how they collected, stored, and processed large volumes of heterogeneous ad-related data. Drawing on our technical and marketing expertise, we helped the customer obtain a secure and highly functional data system.

Solution

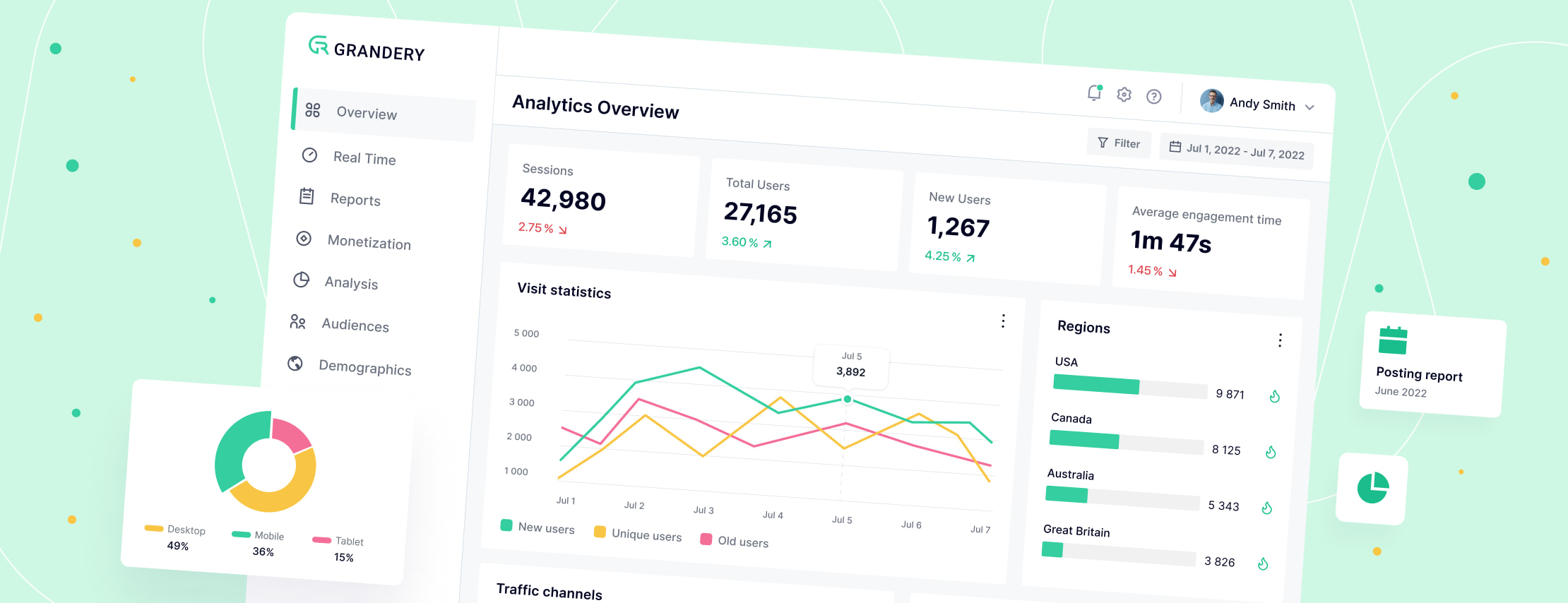

With our contribution, the customer can now use a reliable data lake tailored to the needs of an international advertising agency. The customer's team is able to assess and analyze large amounts of statistical and analytical information (including impressions, clicks, views, posts, and user surveys) in a well-structured and convenient way.

Among other things, the resulting solution offers the following advantages:

- Its architecture is designed to ensure high performance, unification, ease of support, and vendor independence. As a result, the customer can now easily transfer their data lake and data pipelines from Azure to other cloud providers, such as Google or Amazon.

- It has its own unified scheduler and UI engine, unified data pipeline development framework, code generator for such pipelines, and unified data collectors.

- Over 40 data sources are now available, including Google, Facebook, TikTok, and other platforms.

- CRM and website integration allows seamless alignment between campaign data and customer journey insights.

- Conversion tracking and analytics configuration ensure precise measurement of user actions across multiple channels and touchpoints.

- Finally, the data lake/DWH was migrated from an on-premises SQL Server/SSIS environment to a cloud-based platform leveraging Spark on Azure, Databricks, and Snowflake.
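To illustrate what "unified data collectors" might look like in practice, here is a minimal sketch in Python. It is not the customer's actual code: the `AdRecord` schema, the `collector` registry, and the raw field names for each platform are all hypothetical, chosen only to show how many sources can be normalized into one common shape.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List


@dataclass(frozen=True)
class AdRecord:
    """Common schema every collector normalizes into (hypothetical)."""
    source: str
    campaign_id: str
    impressions: int
    clicks: int


# Registry mapping a source name to the function that normalizes its raw rows.
COLLECTORS: Dict[str, Callable[[Iterable[dict]], List[AdRecord]]] = {}


def collector(name: str):
    """Decorator that registers a normalizing function under a source name."""
    def register(fn):
        COLLECTORS[name] = fn
        return fn
    return register


@collector("google")
def collect_google(rows):
    # Raw field names here are assumptions, not a real platform API.
    return [AdRecord("google", r["campaignId"], int(r["impr"]), int(r["clicks"]))
            for r in rows]


@collector("tiktok")
def collect_tiktok(rows):
    # Raw field names here are assumptions, not a real platform API.
    return [AdRecord("tiktok", r["campaign"], int(r["views"]), int(r["taps"]))
            for r in rows]


def collect(source: str, rows: Iterable[dict]) -> List[AdRecord]:
    """Single entry point: downstream code never sees source-specific fields."""
    if source not in COLLECTORS:
        raise KeyError(f"no collector registered for {source!r}")
    return COLLECTORS[source](rows)
```

With this shape, adding a new source means registering one more function, while storage and analytics code keeps working against `AdRecord` only.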

Project results

The following contributions by Andersen are of particular importance:

- A code generator for data/ETL pipelines, allowing the customer to save dozens of working hours when they need to link and activate new data sources. The number of associated errors was also significantly reduced.

- Support for new data sources and performance optimization, along with the implementation of CRM integration, website integration, and server-side tracking capabilities. This significantly improved data accuracy, campaign attribution, and visibility into the customer journey.

- A library of ETL pipeline functions, which unifies various pipelines, simplifies the development process, and facilitates support procedures.
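As a rough illustration of the code-generator idea, the sketch below renders a small pipeline function from a declarative source description. It is an assumption-laden example, not the generator built on the project: the config keys (`name`, `endpoint`, `fields`, `target_table`), the template, and the `extract`/`load` callables are all hypothetical.

```python
from string import Template

# Template for a generated pipeline; only $-placeholders are substituted.
PIPELINE_TEMPLATE = Template('''\
def run_${name}_pipeline(extract, load):
    """Generated pipeline for source '${name}' (illustrative)."""
    rows = extract(${endpoint})
    selected = [{k: row[k] for k in ${fields}} for row in rows]
    load(${target_table}, selected)
    return len(selected)
''')


def generate_pipeline(config: dict) -> str:
    """Return Python source code for a pipeline described by `config`."""
    name = config["name"]
    if not name.isidentifier():
        raise ValueError(f"source name must be a valid identifier: {name!r}")
    return PIPELINE_TEMPLATE.substitute(
        name=name,
        # repr() emits safely quoted Python literals for the generated code.
        endpoint=repr(config["endpoint"]),
        fields=repr(list(config["fields"])),
        target_table=repr(config["target_table"]),
    )


if __name__ == "__main__":
    example = {
        "name": "tiktok",
        "endpoint": "https://example.invalid/ads",  # placeholder URL
        "fields": ["campaign", "clicks"],
        "target_table": "raw_tiktok",
    }
    print(generate_pipeline(example))
```

Because each new source is described by a few config values instead of hand-written code, linking a source becomes a matter of filling in a description, which is one plausible way such a generator saves time and reduces copy-paste errors.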

Finally, a DCP upload functionality created by one of our specialists processes and validates files uploaded via the Excel system.
